The previous posting on this topic has now been removed but is still available as a pdf. It was removed because I thought the solution it was exploring was too complex and would not really work very well, if at all!

Following some useful discussions with Comic Relief staff, I have worked out a much simpler process, which I will describe below.

The problem:

- How do you make summary descriptive statements about the overall performance of a portfolio of activities, if there is no quantitative measure that can be applied to all projects in the portfolio? This kind of problem is likely to be present in projects with complex social development objectives e.g. those relating to accountability, empowerment, governance, etc.

- How to identify the causal factors contributing to an outcome that seems to be unmeasurable because of its complexity? There are methods that can manage causal complexity, such as QCA and Decision Tree modelling, which I have discussed elsewhere on this blog, but each of these is only practicable when there is some form of consistent coding of the type of outcomes that have occurred.

The suggested approach to the outcome measurement problem: A multi-dimensional measure (MDM) for a given project = (The scale of achievement of the project specific outcomes) X (a weighting for the relative importance of that package of outcomes associated with a given project)

Project specific outcomes: Both DFID and DFAT (ex-AusAID) use a relatively simple annotated rating scale to assess the likely or actual achievement of a project’s objectives. By themselves these ratings can’t be sensibly aggregated, because the contents of the outcomes being achieved may be quite different. But this type of score can be used as an input to a larger calculation.

Where these rating systems are not in place, a project specific rating can be generated through one or more types of pair comparison process. See Postscript 1 below.

Weightings: There are many different ways of developing weightings, some of which I have explored elsewhere. These weight individual aspects of performance, then summarize these for each entity having those aspects. For example, the Basic Necessities Survey weights the importance of individual items households may possess, then sums the weights of all the items a household has into an aggregate score.
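
As a rough illustration of that kind of item-weighted score, here is a minimal Python sketch. The item names and weights are invented for the example, and the function name `household_score` is hypothetical; it only shows the "sum the weights of the items a household has" step described above, not the full survey method.

```python
# Hypothetical item weights (invented for illustration only).
item_weights = {"radio": 0.40, "bicycle": 0.65, "mosquito_net": 0.90}

def household_score(items_owned, weights):
    """Sum the weights of the items a household has."""
    return sum(weights[item] for item in items_owned)

# A household that has a bicycle and a mosquito net, but no radio.
score = household_score({"bicycle", "mosquito_net"}, item_weights)
print(round(score, 2))
```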

There is an alternate approach using a variant of the Hierarchical Card Sorting (HCS) process. This identifies clusters of performance attributes, then ranks them. Entities such as projects will have an outcome score that reflects their particular cluster of performance attributes.

- First stage: Participants are asked to sort projects in the portfolio of interest into two piles, according to “what they see as the most significant difference in the outcomes being sought by the projects, in the light of the overall objective of the portfolio, as they see it”.

As with normal use of HCS, the same question is then re-iterated with each newly created group of projects to generate sub-groups of projects and then further sub-sub-groups.

The process stops when participants can no longer identify any significant differences, or when there is only one project left in any sub-group.

In facilitating this process, care needs to be taken to ensure that participants do not start to report differences in the intervention, as distinct from outcomes. These are relevant to a causal analysis, but not to measurement of outcomes, which is the focus here.

The results from this first stage will be a nested classification in the form of a tree with various branches, each representing one or more projects pursuing a particular set of outcomes, as described by the multiple distinctions made at each point in the branch.

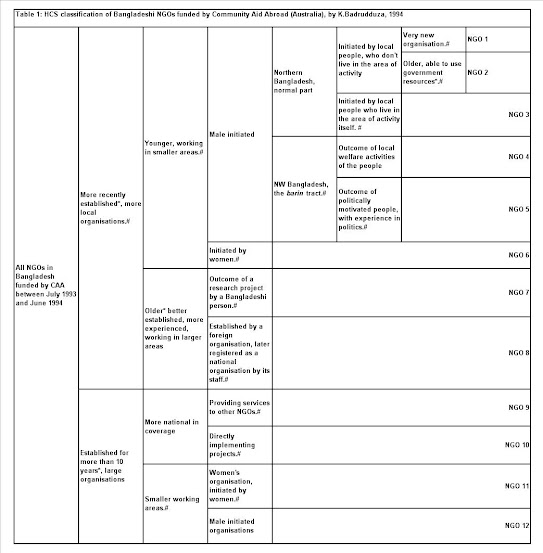

Here is an example of a hierarchical card sorting of projects funded in Bangladesh by an Australian NGO in the early 1990s. [Caveat: It was developed well before the idea for this blog posting emerged, but it gives an idea of the type of tree structure that can be produced using a Hierarchical Card Sort. It is more focused on means than on ends, so please bear this in mind.]

- Second stage: Participants are then asked to make choices at each branching point in the tree, starting from the base of the tree. They are asked to identify which type of outcome (represented by the two diverging branches) they think it is more important for the portfolio owner to be seeking to achieve. When this question is re-iterated down all branches of the tree this will enable a complete ranking of outcome configurations (branches) to be identified.
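
The two stages can be sketched in Python as a small binary tree, where each branching point records which of its two branches participants judged more important. This is only a sketch under assumptions: the `Node` class, the branch labels, the project names, and the rule for turning a rank into a numeric weight (top-ranked branch gets the highest number) are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class Node:
    label: str
    projects: Optional[List[str]] = None   # set on end branches (leaves) only
    preferred: Optional["Node"] = None      # branch judged more important
    other: Optional["Node"] = None          # branch judged less important

def rank_branches(node):
    """Return the end branches ordered from most to least important."""
    if node.projects is not None:           # a leaf: nothing more to split
        return [node]
    # Everything under the preferred branch outranks everything under the other.
    return rank_branches(node.preferred) + rank_branches(node.other)

# Invented example: three end branches holding five projects.
tree = Node(
    "all projects",
    preferred=Node(
        "A",
        preferred=Node("A1", projects=["P1", "P2"]),
        other=Node("A2", projects=["P3"]),
    ),
    other=Node("B", projects=["P4", "P5"]),
)

ranked = rank_branches(tree)
# Assumed weighting rule: the top-ranked branch gets the largest number.
weights = {leaf.label: len(ranked) - i for i, leaf in enumerate(ranked)}
print(weights)
```

Because every choice is made between two branches of the same split, the number of choices needed equals the number of branching points, which is always one less than the number of end branches.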

Score construction: A simple table would then be generated in Excel where rows = projects and columns detailed (a) project specific ratings, (b) outcome weightings (i.e. the ranking of the branch that the project belonged to), (c) the product of the rating and weighting values.
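
The same table could be built in a few lines of Python instead of Excel. In this sketch the project names, ratings and weights are invented, and the column name `mdm` is just a label chosen here for the product of rating and weighting.

```python
# Each row: (a) project specific rating, (b) outcome weighting of its branch.
# All values are invented for illustration.
table = [
    {"project": "P1", "rating": 4, "weight": 3},
    {"project": "P2", "rating": 2, "weight": 3},
    {"project": "P3", "rating": 5, "weight": 2},
    {"project": "P4", "rating": 3, "weight": 1},
]

# (c) the product of the rating and weighting values.
for row in table:
    row["mdm"] = row["rating"] * row["weight"]

# List projects from highest to lowest multi-dimensional measure.
for row in sorted(table, key=lambda r: r["mdm"], reverse=True):
    print(row["project"], row["rating"], row["weight"], row["mdm"])
```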

Next: Now I need some real life examples, to show how this works in practice…and/or to discover the practical difficulties of using this approach. Any offers?

Postscript 1: Generating project ratings from pair comparisons. In my earlier version of this blog I explored the potential of a pair comparison method as a means of coming up with an overall ranking of project outcomes in a portfolio. The downside of this, as pointed out by Tom Thomas reflecting on PRA experiences, was that pair comparisons can be very time consuming and the time cost rises steeply (roughly with the square of N) as the number of entities being compared increases. The number of pair comparisons = N(N-1)/2.
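
As a quick check on how the workload grows: if every project is compared once with every other project, the number of comparisons is N(N-1)/2. The short Python sketch below counts the pairs directly and confirms the formula for a few portfolio sizes (the sizes are arbitrary examples).

```python
from itertools import combinations

def pair_comparisons(n):
    """Number of distinct pairs among n items."""
    return n * (n - 1) // 2

for n in (5, 10, 20, 40):
    # Count the pairs one by one and check them against the formula.
    listed = sum(1 for _ in combinations(range(n), 2))
    assert listed == pair_comparisons(n)
    print(n, "projects:", pair_comparisons(n), "comparisons")
```

Doubling the number of projects roughly quadruples the number of comparisons, which is why the method becomes impractical for larger portfolios.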

In the process of exploring this approach I ended up reading some of the literature on sorting algorithms. Processing cost (i.e. time taken to make comparisons of items) is one of the criteria that is used to assess the value of a sorting algorithm. Not surprisingly perhaps there is a huge variety of sorting algorithms. One which I have developed is described in this short Word file (NB: It was probably already developed by someone else many years ago!)

More recently still (April 2015), I have just finished reading Computational Fairy Tales by Jeremy Kubica, which I recommend to beginners in this area (such as me). In that book the author describes something called a QuickSort sorting algorithm, which sounds very useful for minimising the number of pair comparisons needed to generate a complete ranking of a set of cases of interest. On average it works well, but in the worst case it can require in the order of N squared comparisons. But this worst case is unlikely to apply when humans are doing the sorting, because they can pick what are called "pivot" cases more purposively, whereas a basic computerised version chooses them arbitrarily or at random. Good human choices of pivot cases with approximate median values should mean the sorting process is as quick as it can be with this type of algorithm.
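
To get a feel for how much the choice of pivot matters, here is a minimal Python sketch of a QuickSort-style ranking that counts the pair comparisons it makes. Everything in it is an assumption made for illustration: the 15 "projects" are just the numbers 1 to 15 (a higher number standing for a better outcome), `prefer` stands in for a human judgement between two projects, `median_pivot` stands in for a well-informed human choice of a middling case, and `first_pivot` simply takes the first card in the pile.

```python
def rank_by_quicksort(items, prefer, choose_pivot):
    """Return (ranking best-first, number of pair comparisons made)."""
    if len(items) <= 1:
        return list(items), 0
    pivot = choose_pivot(items)
    better, worse, comparisons = [], [], 0
    for item in items:
        if item == pivot:              # items are assumed to be distinct
            continue
        comparisons += 1               # one judgement: item versus pivot
        (better if prefer(item, pivot) else worse).append(item)
    ranked_better, c1 = rank_by_quicksort(better, prefer, choose_pivot)
    ranked_worse, c2 = rank_by_quicksort(worse, prefer, choose_pivot)
    return ranked_better + [pivot] + ranked_worse, comparisons + c1 + c2

def prefer(a, b):
    """Stand-in for a human judging that a is better than b."""
    return a > b

def median_pivot(items):
    """Stand-in for a human picking a roughly middling case as pivot."""
    return sorted(items)[len(items) // 2]

def first_pivot(items):
    """Naive choice: always take the first card in the pile."""
    return items[0]

projects = list(range(1, 16))          # 15 projects, already in worst-to-best order

ranking_good, cost_good = rank_by_quicksort(projects, prefer, median_pivot)
ranking_bad, cost_bad = rank_by_quicksort(projects, prefer, first_pivot)
print(cost_good, cost_bad)             # 34 versus 105 comparisons
```

With 15 projects, comparing every pair would take 105 comparisons, and that is also what the naive pivot choice costs on this pile. Median-like pivots bring it down to 34 in this example, which is the saving a purposive human choice of pivots is hoped to approximate.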